
Ba Yanxing

Shenzhen Kaihong Digital Industry Development Co., Ltd.

Senior OS Framework Development Engineer

1. Introduction

2. Directory Structure

audio_framework

├── frameworks

│ ├── js #js 接口

│ │ └── napi

│ │ └── audio_renderer #audio_renderer NAPI接口

│ │ ├── include

│ │ │ ├── audio_renderer_callback_napi.h

│ │ │ ├── renderer_data_request_callback_napi.h

│ │ │ ├── renderer_period_position_callback_napi.h

│ │ │ └── renderer_position_callback_napi.h

│ │ └── src

│ │ ├── audio_renderer_callback_napi.cpp

│ │ ├── audio_renderer_napi.cpp

│ │ ├── renderer_data_request_callback_napi.cpp

│ │ ├── renderer_period_position_callback_napi.cpp

│ │ └── renderer_position_callback_napi.cpp

│ └── native #native 接口

│ └── audiorenderer

│ ├── BUILD.gn

│ ├── include

│ │ ├── audio_renderer_private.h

│ │ └── audio_renderer_proxy_obj.h

│ ├── src

│ │ ├── audio_renderer.cpp

│ │ └── audio_renderer_proxy_obj.cpp

│ └── test

│ └── example

│ └── audio_renderer_test.cpp

├── interfaces

│ ├── inner_api #native实现的接口

│ │ └── native

│ │ └── audiorenderer #audio渲染本地实现的接口定义

│ │ └── include

│ │ └── audio_renderer.h

│ └── kits #js调用的接口

│ └── js

│ └── audio_renderer #audio渲染NAPI接口的定义

│ └── include

│ └── audio_renderer_napi.h

└── services #服务端

└── audio_service

├── BUILD.gn

├── client #IPC调用中的proxy端

│ ├── include

│ │ ├── audio_manager_proxy.h

│ │ ├── audio_service_client.h

│ └── src

│ ├── audio_manager_proxy.cpp

│ ├── audio_service_client.cpp

└── server #IPC调用中的server端

├── include

│ └── audio_server.h

└── src

├── audio_manager_stub.cpp

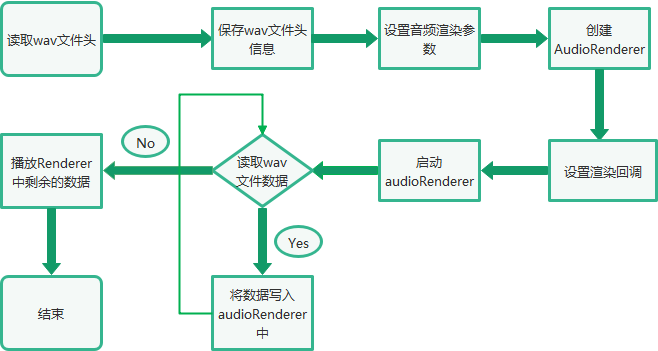

└──audio_server.cpp三、音频渲染总体流程

4. Using the Native Interface
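The test in this section begins by reading the WAV file header and copying its sample rate, channel count, and bit depth into the renderer options. As a rough, framework-independent sketch of that first step, the snippet below parses those three fields from a buffer. It assumes the canonical 44-byte PCM header layout; `WavInfo`, `ReadLe`, and `ParseWavHeader` are hypothetical names used only for illustration and are not part of OpenHarmony.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical holder for the header fields the renderer options need.
struct WavInfo {
    uint16_t numChannels;
    uint32_t sampleRate;
    uint16_t bitsPerSample;
};

// Read an n-byte little-endian unsigned integer (n <= 4).
inline uint32_t ReadLe(const uint8_t *p, size_t n)
{
    uint32_t value = 0;
    for (size_t i = 0; i < n; ++i) {
        value |= static_cast<uint32_t>(p[i]) << (8 * i);
    }
    return value;
}

// Parse a canonical 44-byte PCM WAV header (assumed layout).
// Returns false if the buffer is too short or the chunk tags do not match.
inline bool ParseWavHeader(const uint8_t *data, size_t size, WavInfo &out)
{
    constexpr size_t kHeaderSize = 44;
    if (data == nullptr || size < kHeaderSize) {
        return false;
    }
    if (std::memcmp(data, "RIFF", 4) != 0 ||
        std::memcmp(data + 8, "WAVE", 4) != 0 ||
        std::memcmp(data + 12, "fmt ", 4) != 0) {
        return false;
    }
    out.numChannels = static_cast<uint16_t>(ReadLe(data + 22, 2));
    out.sampleRate = ReadLe(data + 24, 4);
    out.bitsPerSample = static_cast<uint16_t>(ReadLe(data + 34, 2));
    return true;
}
```

In a caller, the parsed values would then be converted to the framework's own sampling-rate, channel, and sample-format types before being passed to `AudioRenderer::Create`.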

bool TestPlayback(int argc, char *argv[]) const

{

FILE* wavFile = fopen(path, "rb");

//读取wav文件头信息

size_t bytesRead = fread(&wavHeader, 1, headerSize, wavFile);

//设置AudioRenderer参数

AudioRendererOptions rendererOptions = {};

rendererOptions.streamInfo.encoding = AudioEncodingType::ENCODING_PCM;

rendererOptions.streamInfo.samplingRate = static_cast(wavHeader.SamplesPerSec);

rendererOptions.streamInfo.format = GetSampleFormat(wavHeader.bitsPerSample);

rendererOptions.streamInfo.channels = static_cast(wavHeader.NumOfChan);

rendererOptions.rendererInfo.contentType = contentType;

rendererOptions.rendererInfo.streamUsage = streamUsage;

rendererOptions.rendererInfo.rendererFlags = 0;

//创建AudioRender实例

unique_ptr audioRenderer = AudioRenderer::Create(rendererOptions);

shared_ptr cb1 = make_shared();

//设置音频渲染回调

ret = audioRenderer->SetRendererCallback(cb1);

//InitRender方法主要调用了audioRenderer实例的Start方法,启动音频渲染

if (!InitRender(audioRenderer)) {

AUDIO_ERR_LOG("AudioRendererTest: Init render failed");

fclose(wavFile);

return false;

}

//StartRender方法主要是读取wavFile文件的数据,然后通过调用audioRenderer实例的Write方法进行播放

if (!StartRender(audioRenderer, wavFile)) {

AUDIO_ERR_LOG("AudioRendererTest: Start render failed");

fclose(wavFile);

return false;

}

//停止渲染

if (!audioRenderer->Stop()) {

AUDIO_ERR_LOG("AudioRendererTest: Stop failed");

}

//释放渲染

if (!audioRenderer->Release()) {

AUDIO_ERR_LOG("AudioRendererTest: Release failed");

}

//关闭wavFile

fclose(wavFile);

return true;

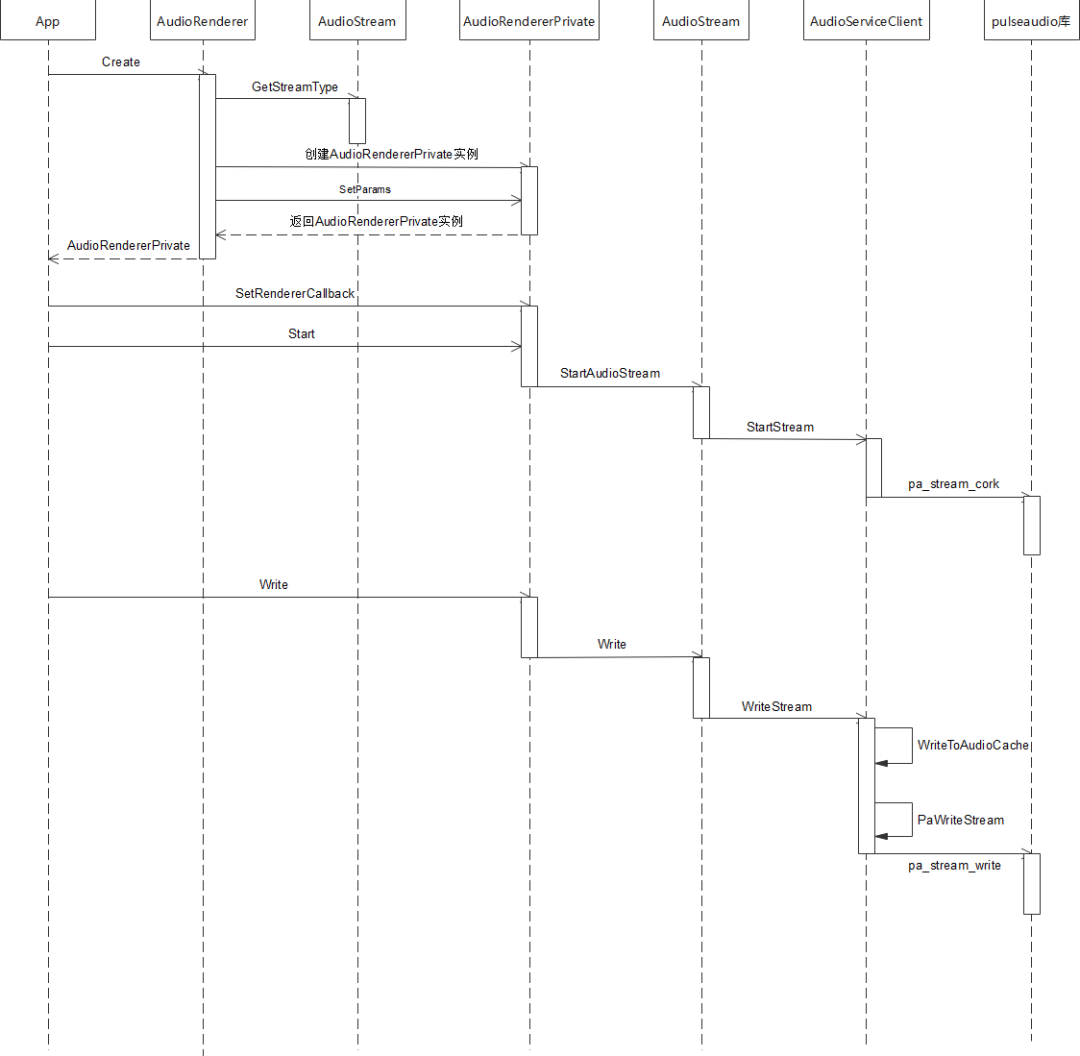

}五、调用流程
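The native test calls the renderer in a fixed order: Create, SetRendererCallback, Start, Write, Stop, Release. The sketch below models that order as a small state machine so the sequencing is easier to follow. Only the guard in `SetCallback` (reject the NEW and RELEASED states) is taken from the article's code; every other transition, along with the names `State` and `RendererLifecycleSketch`, is an assumption made for illustration and may differ from the real framework.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical lifecycle states; NEW and RELEASED echo RENDERER_NEW and
// RENDERER_RELEASED from the article, the rest are assumptions.
enum class State { NEW, PREPARED, RUNNING, STOPPED, RELEASED };

class RendererLifecycleSketch {
public:
    // Stands in for Create/SetParams: NEW -> PREPARED.
    bool SetParams() { return Move(State::NEW, State::PREPARED); }

    // Mirrors the guard in SetRendererCallback: refuse NEW and RELEASED.
    bool SetCallback() const
    {
        return state_ != State::NEW && state_ != State::RELEASED;
    }

    // Assumed rule: Start is valid from PREPARED or STOPPED.
    bool Start()
    {
        if (state_ != State::PREPARED && state_ != State::STOPPED) {
            return false;
        }
        state_ = State::RUNNING;
        return true;
    }

    // Assumed rule: data is accepted only while RUNNING.
    bool Write(size_t bytes) const { return state_ == State::RUNNING && bytes > 0; }

    bool Stop() { return Move(State::RUNNING, State::STOPPED); }

    // Assumed rule: Release is valid once, from any non-released state.
    bool Release()
    {
        if (state_ == State::RELEASED) {
            return false;
        }
        state_ = State::RELEASED;
        return true;
    }

private:
    bool Move(State from, State to)
    {
        if (state_ != from) {
            return false;
        }
        state_ = to;
        return true;
    }

    State state_ = State::NEW;
};
```

Calling the methods in the order the test uses succeeds at every step, while calling `Write` before `Start` or `SetCallback` after `Release` returns false.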

std::unique_ptr<AudioRenderer> AudioRenderer::Create(const std::string cachePath,
    const AudioRendererOptions &rendererOptions, const AppInfo &appInfo)
{
    // Derive the stream type from the content type and stream usage
    ContentType contentType = rendererOptions.rendererInfo.contentType;
    StreamUsage streamUsage = rendererOptions.rendererInfo.streamUsage;
    AudioStreamType audioStreamType = AudioStream::GetStreamType(contentType, streamUsage);

    auto audioRenderer = std::make_unique<AudioRendererPrivate>(audioStreamType, appInfo);
    if (!cachePath.empty()) {
        AUDIO_DEBUG_LOG("Set application cache path");
        audioRenderer->SetApplicationCachePath(cachePath);
    }

    audioRenderer->rendererInfo_.contentType = contentType;
    audioRenderer->rendererInfo_.streamUsage = streamUsage;
    audioRenderer->rendererInfo_.rendererFlags = rendererOptions.rendererInfo.rendererFlags;

    // Copy the stream settings into the renderer parameters
    AudioRendererParams params;
    params.sampleFormat = rendererOptions.streamInfo.format;
    params.sampleRate = rendererOptions.streamInfo.samplingRate;
    params.channelCount = rendererOptions.streamInfo.channels;
    params.encodingType = rendererOptions.streamInfo.encoding;

    if (audioRenderer->SetParams(params) != SUCCESS) {
        AUDIO_ERR_LOG("SetParams failed in renderer");
        audioRenderer = nullptr;
        return nullptr;
    }

    return audioRenderer;
}

int32_t AudioRendererPrivate::SetRendererCallback(const std::shared_ptr<AudioRendererCallback> &callback)

{
    // A callback can only be set once the renderer has left the NEW state and before it is released
    RendererState state = GetStatus();
    if (state == RENDERER_NEW || state == RENDERER_RELEASED) {
        return ERR_ILLEGAL_STATE;
    }
    if (callback == nullptr) {
        return ERR_INVALID_PARAM;
    }

    // Save reference for interrupt callback
    if (audioInterruptCallback_ == nullptr) {
        return ERROR;
    }
    std::shared_ptr<AudioInterruptCallbackImpl> cbInterrupt =
        std::static_pointer_cast<AudioInterruptCallbackImpl>(audioInterruptCallback_);
    cbInterrupt->SaveCallback(callback);

    // Save and Set reference for stream callback. Order is important here.
    if (audioStreamCallback_ == nullptr) {
        audioStreamCallback_ = std::make_shared<AudioStreamCallbackRenderer>();
        if (audioStreamCallback_ == nullptr) {
            return ERROR;
        }
    }
    std::shared_ptr<AudioStreamCallbackRenderer> cbStream =
        std::static_pointer_cast<AudioStreamCallbackRenderer>(audioStreamCallback_);
    cbStream->SaveCallback(callback);
    (void)audioStream_->SetStreamCallback(audioStreamCallback_);

    return SUCCESS;
}

bool AudioRendererPrivate::Start(StateChangeCmdType cmdType) const

{
    AUDIO_INFO_LOG("AudioRenderer::Start");
    RendererState state = GetStatus();

    // Pick the interrupt descriptor that matches the current interrupt mode
    AudioInterrupt audioInterrupt;
    switch (mode_) {
        case InterruptMode::SHARE_MODE:
            audioInterrupt = sharedInterrupt_;
            break;
        case InterruptMode::INDEPENDENT_MODE:
            audioInterrupt = audioInterrupt_;
            break;
        default:
            break;
    }
    AUDIO_INFO_LOG("AudioRenderer: %{public}d, streamType: %{public}d, sessionID: %{public}d",
        mode_, audioInterrupt.streamType, audioInterrupt.sessionID);

    if (audioInterrupt.streamType == STREAM_DEFAULT || audioInterrupt.sessionID == INVALID_SESSION_ID) {
        return false;
    }

    // Request audio focus from the policy manager before starting the stream
    int32_t ret = AudioPolicyManager::GetInstance().ActivateAudioInterrupt(audioInterrupt);
    if (ret != 0) {
        AUDIO_ERR_LOG("AudioRendererPrivate::ActivateAudioInterrupt Failed");
        return false;
    }

    return audioStream_->StartAudioStream(cmdType);
}

bool AudioStream::StartAudioStream(StateChangeCmdType cmdType)

{
    int32_t ret = StartStream(cmdType);

    resetTime_ = true;
    int32_t retCode = clock_gettime(CLOCK_MONOTONIC, &baseTimestamp_);

    // In callback mode, start a worker thread that drives the write (or read) loop
    if (renderMode_ == RENDER_MODE_CALLBACK) {
        isReadyToWrite_ = true;
        writeThread_ = std::make_unique<std::thread>(&AudioStream::WriteCbTheadLoop, this);
    } else if (captureMode_ == CAPTURE_MODE_CALLBACK) {
        isReadyToRead_ = true;
        readThread_ = std::make_unique<std::thread>(&AudioStream::ReadCbThreadLoop, this);
    }

    isFirstRead_ = true;
    isFirstWrite_ = true;
    state_ = RUNNING;
    AUDIO_INFO_LOG("StartAudioStream SUCCESS");

    if (audioStreamTracker_) {
        AUDIO_DEBUG_LOG("AudioStream:Calling Update tracker for Running");
        audioStreamTracker_->UpdateTracker(sessionId_, state_, rendererInfo_, capturerInfo_);
    }
    return true;
}

int32_t AudioServiceClient::StartStream(StateChangeCmdType cmdType)

{
    int error;
    lock_guard<mutex> lockdata(dataMutex);
    pa_operation *operation = nullptr;

    pa_threaded_mainloop_lock(mainLoop);

    pa_stream_state_t state = pa_stream_get_state(paStream);

    streamCmdStatus = 0;
    stateChangeCmdType_ = cmdType;
    // Uncork (resume) the PulseAudio stream; the callback reports the result
    operation = pa_stream_cork(paStream, 0, PAStreamStartSuccessCb, (void *)this);

    // Wait until the asynchronous operation has finished
    while (pa_operation_get_state(operation) == PA_OPERATION_RUNNING) {
        pa_threaded_mainloop_wait(mainLoop);
    }
    pa_operation_unref(operation);
    pa_threaded_mainloop_unlock(mainLoop);

    if (!streamCmdStatus) {
        AUDIO_ERR_LOG("Stream Start Failed");
        ResetPAAudioClient();
        return AUDIO_CLIENT_START_STREAM_ERR;
    } else {
        AUDIO_INFO_LOG("Stream Started Successfully");
        return AUDIO_CLIENT_SUCCESS;
    }
}

int32_t AudioRendererPrivate::Write(uint8_t *buffer, size_t bufferSize)

{
    return audioStream_->Write(buffer, bufferSize);
}

size_t AudioStream::Write(uint8_t *buffer, size_t buffer_size)
{
    int32_t writeError;
    StreamBuffer stream;
    stream.buffer = buffer;
    stream.bufferLen = buffer_size;
    isWriteInProgress_ = true;

    // Pre-buffer once before the first write
    if (isFirstWrite_) {
        if (RenderPrebuf(stream.bufferLen)) {
            return ERR_WRITE_FAILED;
        }
        isFirstWrite_ = false;
    }

    size_t bytesWritten = WriteStream(stream, writeError);
    isWriteInProgress_ = false;
    if (writeError != 0) {
        AUDIO_ERR_LOG("WriteStream fail,writeError:%{public}d", writeError);
        return ERR_WRITE_FAILED;
    }
    return bytesWritten;
}

size_t AudioServiceClient::WriteStream(const StreamBuffer &stream, int32_t &pError)

{
    int error = 0;

    // Accumulate data in the client-side cache; return early until it is full
    size_t cachedLen = WriteToAudioCache(stream);
    if (!acache.isFull) {
        pError = error;
        return cachedLen;
    }

    pa_threaded_mainloop_lock(mainLoop);

    // The cache is full: hand the whole cache to PulseAudio
    const uint8_t *buffer = acache.buffer.get();
    size_t length = acache.totalCacheSize;

    error = PaWriteStream(buffer, length);
    acache.readIndex += acache.totalCacheSize;
    acache.isFull = false;

    if (!error && (length >= 0) && !acache.isFull) {
        // Move any unread bytes to the front of the cache
        uint8_t *cacheBuffer = acache.buffer.get();
        uint32_t offset = acache.readIndex;
        uint32_t size = (acache.writeIndex - acache.readIndex);
        if (size > 0) {
            if (memcpy_s(cacheBuffer, acache.totalCacheSize, cacheBuffer + offset, size)) {
                AUDIO_ERR_LOG("Update cache failed");
                pa_threaded_mainloop_unlock(mainLoop);
                pError = AUDIO_CLIENT_WRITE_STREAM_ERR;
                return cachedLen;
            }
            AUDIO_INFO_LOG("rearranging the audio cache");
        }
        acache.readIndex = 0;
        acache.writeIndex = 0;

        // Cache whatever part of the caller's buffer did not fit before the flush
        if (cachedLen < stream.bufferLen) {
            StreamBuffer str;
            str.buffer = stream.buffer + cachedLen;
            str.bufferLen = stream.bufferLen - cachedLen;
            AUDIO_DEBUG_LOG("writing pending data to audio cache: %{public}d", str.bufferLen);
            cachedLen += WriteToAudioCache(str);
        }
    }

    pa_threaded_mainloop_unlock(mainLoop);
    pError = error;
    return cachedLen;
}

6. Summary
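The write path above ends in the client-side cache of `WriteStream`: incoming data is accumulated until the cache is full, the full cache is flushed in one piece, and any bytes that did not fit are cached for the next round. The sketch below reproduces only that buffering pattern in plain C++. `AudioCacheSketch` and its `Sink` callback are hypothetical stand-ins (the sink plays the role of `PaWriteStream`), locking and error handling are left out, and a non-zero capacity is assumed.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical stand-in for the client-side audio cache; not the OpenHarmony type.
class AudioCacheSketch {
public:
    using Sink = std::function<void(const uint8_t *, size_t)>;

    AudioCacheSketch(size_t capacity, Sink sink)
        : buffer_(capacity), sink_(std::move(sink)) {}

    // Accept up to len bytes and return how many were cached.
    // At most one flush happens per call, as in the article's WriteStream.
    size_t Write(const uint8_t *data, size_t len)
    {
        size_t cached = Append(data, len);
        if (writeIndex_ < buffer_.size()) {
            return cached;                      // not full yet: nothing reaches the sink
        }
        sink_(buffer_.data(), buffer_.size());  // flush exactly one full cache
        writeIndex_ = 0;
        if (cached < len) {
            cached += Append(data + cached, len - cached);  // keep the pending tail
        }
        return cached;
    }

    // Number of bytes currently waiting in the cache.
    size_t Pending() const { return writeIndex_; }

private:
    // Copy as many bytes as still fit into the cache.
    size_t Append(const uint8_t *data, size_t len)
    {
        size_t n = std::min(len, buffer_.size() - writeIndex_);
        std::copy(data, data + n, buffer_.begin() + writeIndex_);
        writeIndex_ += n;
        return n;
    }

    std::vector<uint8_t> buffer_;
    Sink sink_;
    size_t writeIndex_ = 0;
};
```

With a capacity of 8 bytes, two 6-byte writes produce a single 8-byte flush and leave 4 bytes pending, which is why small writes from the application do not each translate into a write to the audio server.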

Original title: OpenHarmony 3.2 Beta Audio: Audio Rendering

Source: WeChat official account OpenAtom OpenHarmony (WeChat ID: gh_e4f28cfa3159). Please credit the source when reposting.
