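Preface

Code repository: https://github.com/Oneflow-Inc/one-yolov5. Star the one-yolov5 project to follow the latest updates. If you run into problems, you are welcome to raise them in the repository, and if the project helps you, a star is appreciated.

Source code walkthrough: train.py. This article contains a large number of hyperlinks, but external links are stripped from WeChat articles, so please refer to the complete online version of this article.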

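This file is the YOLOv5 training script. The overall flow of the code is:

Preparation: data + model + learning rate + optimizer

Training:

A training run (excluding data preparation) iterates over the training set many times. Each pass is called an epoch, and each epoch is split into multiple batches. In order, the flow breaks down into:

Start of training

Before an epoch

Before a batch

After a batch

After an epoch

Evaluation on the validation set

End of training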
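The stages listed above can be sketched as a plain nested loop. This is only an illustrative skeleton, not the code of train.py: `run_training` is a hypothetical helper, and the `on_train_batch_end` hook name is an assumption (the other hook names appear in the code later in this article).

```python
def run_training(num_epochs, batches, hooks):
    """Walk the training stages in order and record which hook would fire."""
    hooks.append("on_train_start")
    for epoch in range(num_epochs):
        hooks.append(f"on_train_epoch_start:{epoch}")
        for i, _batch in enumerate(batches):
            hooks.append(f"on_train_batch_start:{epoch}.{i}")
            # forward pass, loss, backward pass and optimizer step would go here
            hooks.append(f"on_train_batch_end:{epoch}.{i}")  # assumed hook name
        hooks.append(f"on_train_epoch_end:{epoch}")
        # evaluation on the validation set follows each epoch
        hooks.append(f"on_fit_epoch_end:{epoch}")
    hooks.append("on_train_end")
    return hooks


events = run_training(num_epochs=2, batches=["b0", "b1"], hooks=[])
for name in events:
    print(name)
```

Recording the stage names in a list makes the ordering easy to inspect before reading the real loop in section 4.12.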

1. Import the required packages and basic configuration

import argparse  # command-line argument parsing
import math  # math functions
import os  # OS interaction, including file path handling and parsing
import random  # random number generation
import sys  # functions related to the Python interpreter and its environment
import time  # low-level time utilities
from copy import deepcopy  # deep copy
from datetime import datetime  # basic date and time types
from pathlib import Path  # converts str paths to Path objects that are easier to manipulate

import numpy as np  # array operations
import oneflow as flow  # OneFlow deep learning framework
import oneflow.distributed as dist  # distributed training
import oneflow.nn as nn  # class wrappers around oneflow.nn.functional; many functions share the same names
import yaml  # YAML file handling
from oneflow.optim import lr_scheduler  # learning rate schedulers
from tqdm import tqdm  # progress bar

import val  # val.py, for end-of-epoch mAP
from models.experimental import attempt_load  # model loading helper (online download)
from models.yolo import Model  # YOLOv5 model definition
from utils.autoanchor import check_anchors  # checks whether the anchors are valid for the dataset

# Callbacks https://start.oneflow.org/oneflow-yolo-doc/source_code_interpretation/callbacks_py.html
from utils.callbacks import Callbacks  # logging-related callbacks

# dataloaders https://github.com/Oneflow-Inc/oneflow-yolo-doc/blob/master/docs/source_code_interpretation/utils/dataladers_py.md
from utils.dataloaders import create_dataloader  # builds the dataset loader

# downloads https://github.com/Oneflow-Inc/oneflow-yolo-doc/blob/master/docs/source_code_interpretation/utils/downloads_py.md
from utils.downloads import is_url  # checks whether a string is a URL

# general https://github.com/Oneflow-Inc/oneflow-yolo-doc/blob/master/docs/source_code_interpretation/utils/general_py.md
from utils.general import check_img_size  # check_suffix,
from utils.general import (
    LOGGER,
    check_dataset,
    check_file,
    check_git_status,
    check_requirements,
    check_yaml,
    colorstr,
    get_latest_run,
    increment_path,
    init_seeds,
    intersect_dicts,
    labels_to_class_weights,
    labels_to_image_weights,
    methods,
    one_cycle,
    print_args,
    print_mutation,
    strip_optimizer,
    yaml_save,
    model_save,
)
from utils.loggers import Loggers  # log management
from utils.loggers.wandb.wandb_utils import check_wandb_resume
from utils.loss import ComputeLoss  # loss computation

# In YOLOv5, the fitness function is a weighted combination of [P, R, mAP@.5, mAP@.5-.95]
from utils.metrics import fitness

# early stopping, model EMA, de-parallelization, device selection, smart DDP, smart optimizer and smart resume
from utils.oneflow_utils import EarlyStopping, ModelEMA, de_parallel, select_device, smart_DDP, smart_optimizer, smart_resume
from utils.plots import plot_evolve, plot_labels

# LOCAL_RANK: index of the GPU used by the current process.
LOCAL_RANK = int(os.getenv("LOCAL_RANK", -1))  # https://pytorch.org/docs/stable/elastic/run.html
# RANK: index of the current process, used for inter-process communication; the host with rank 0 is the master node.
RANK = int(os.getenv("RANK", -1))
# WORLD_SIZE: total number of processes (in principle, one process per GPU works best).
WORLD_SIZE = int(os.getenv("WORLD_SIZE", 1))

# On Linux:
# FILE = 'path/to/one-yolov5/train.py'
# Adds 'path/to/one-yolov5' to sys.path; the change no longer applies once the script exits.
FILE = Path(__file__).resolve()
ROOT = FILE.parents[0]  # YOLOv5 root directory
if str(ROOT) not in sys.path:
    sys.path.append(str(ROOT))  # add ROOT to PATH
ROOT = Path(os.path.relpath(ROOT, Path.cwd()))  # relative

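2. parse_opt function

This function defines the opt arguments.

weights: path of the weights file
cfg: model configuration file, including nc, depth_multiple, width_multiple, anchors, backbone, head, etc.
data: dataset configuration file, including path, train, val, test, nc, names, download, etc.
hyp: initial hyperparameter file
epochs: number of training epochs
batch-size: training batch size
img-size: resolution of the images fed to the network
resume: resume training from where the previous run was interrupted; default False
nosave: do not save the model; default False (save). True: only save the final checkpoint
notest: whether to validate only in the last epoch; default False. True: validate only in the last epoch. False: compute mAP after every epoch (the code below refers to this option as opt.noval)
workers: maximum number of dataloader workers
device: training device
single-cls: whether the dataset has only one class; default False
rect: whether to use rectangular training for the training set; default False. See https://start.oneflow.org/oneflow-yolo-doc/tutorials/05_chapter/rectangular_reasoning.html
noautoanchor: do not adjust the anchors automatically; default False (anchors are adjusted automatically)
evolve: whether to run hyperparameter evolution; default False
multi-scale: whether to use multi-scale training; default False
label-smoothing: label smoothing; default 0.0 (off); a typical value when enabled is 0.1
adam: whether to use the Adam optimizer; default False (SGD) (the code below selects the optimizer through opt.optimizer)
sync-bn: whether to use cross-GPU synchronized BN, used in DDP mode; default False
linear-lr: whether to use a linear learning rate; default False (cosine learning rate) (the code below switches on opt.cos_lr)
cache-image: whether to cache images in memory ahead of time to speed up training; default False
image-weights: whether to use weighted image selection (selecting images for training by class weights); default False
bucket: Google cloud bucket; rarely needed
project: root directory for training results; default runs/train
name: directory for training results; default exp, giving runs/train/exp
exist-ok: if True, an existing project/name directory is reused; otherwise a new, incremented name is created; default False
quad: whether the dataloader uses collate_fn4 instead of collate_fn; default False
save_period: log the model every "save_period" epochs; default -1 (no model logging)
artifact_alias: which version of the dataset artifact to use; default latest; this argument does not appear to be used
local_rank: index of the GPU used by the current process; with -1 and a single GPU, no distributed training is performed
entity: wandb entity; default None
upload_dataset: whether to upload the dataset to a wandb table (an interactive dsviz table for viewing, querying, filtering and analyzing the dataset in the browser); default False
bbox_interval: bounding-box image logging interval for W&B; default -1, in which case opt.epochs // 10 is used
bbox_iou_optim: enables OneFlow's optimization of the bbox_iou computation, which makes training faster

For more details, see the complete version of this article.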
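To make the option list more concrete, here is a minimal argparse sketch of a handful of these arguments. It is not the real parse_opt: `parse_opt_sketch` is a hypothetical name, the defaults are illustrative, and the `nargs="?"` form of `--resume` is an assumption chosen because the resume logic described below accepts either True or a checkpoint path.

```python
import argparse


def parse_opt_sketch(argv=None):
    """Parse a small, illustrative subset of the training options."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--weights", type=str, default="", help="initial weights path")
    parser.add_argument("--epochs", type=int, default=300)
    parser.add_argument("--batch-size", type=int, default=16)
    # bare "--resume" yields True, "--resume path" yields the path, absence yields False
    parser.add_argument("--resume", nargs="?", const=True, default=False)
    parser.add_argument("--rect", action="store_true", help="rectangular training")
    parser.add_argument("--label-smoothing", type=float, default=0.0)
    return parser.parse_args(argv)


defaults = parse_opt_sketch([])
resumed = parse_opt_sketch(["--resume", "--batch-size", "32", "--rect"])
from_path = parse_opt_sketch(["--resume", "runs/train/exp/weights/last"])
print(defaults.resume, resumed.resume, from_path.resume)
```

Note that argparse turns dashes into underscores, so `--batch-size` is read back as `opt.batch_size`, which is the spelling used throughout train.py.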

3 main function

3.1 Checks

def main(opt, callbacks=Callbacks()):
    # Checks
    if RANK in {-1, 0}:
        # print all training opt arguments  train: ...
        print_args(vars(opt))
        # check whether the code is up to date  github: ...
        check_git_status()
        # check that the packages listed in requirements.txt are installed  requirements: ...
        check_requirements(exclude=["thop"])

3.2 Resume

Decide whether to resume from a checkpoint, then read the arguments.

When resuming, the arguments are read from the directory of the path/to/last checkpoint; otherwise they are read from the files given on the command line.

    # 2. Decide whether to resume from a checkpoint and read the arguments
    if opt.resume and not (check_wandb_resume(opt) or opt.evolve):  # resume from specified or most recent last
        # When resuming, read the arguments from the directory of the last checkpoint
        # If resume is a str, it is the path of the checkpoint
        # If resume is True, get_latest_run() finds the most recent "last" weights under the runs directory
        last = Path(check_file(opt.resume) if isinstance(opt.resume, str) else get_latest_run())
        opt_yaml = last.parent.parent / "opt.yaml"  # train options yaml
        opt_data = opt.data  # original dataset
        if opt_yaml.is_file():
            # the opt arguments are replaced by the ones saved with last
            with open(opt_yaml, errors="ignore") as f:
                d = yaml.safe_load(f)
        else:
            d = flow.load(last, map_location="cpu")["opt"]
        opt = argparse.Namespace(**d)  # replace
        opt.cfg, opt.weights, opt.resume = "", str(last), True  # reinstate
        if is_url(opt_data):
            opt.data = check_file(opt_data)  # avoid HUB resume auth timeout
    else:
        # Not resuming: read the arguments from the given files
        # opt.hyp = opt.hyp or ('hyp.finetune.yaml' if opt.weights else 'hyp.scratch.yaml')
        opt.data, opt.cfg, opt.hyp, opt.weights, opt.project = (
            check_file(opt.data),
            check_yaml(opt.cfg),
            check_yaml(opt.hyp),
            str(opt.weights),
            str(opt.project),
        )  # checks
        assert len(opt.cfg) or len(opt.weights), "either --cfg or --weights must be specified"
        if opt.evolve:
            if opt.project == str(ROOT / "runs/train"):  # if default project name, rename to runs/evolve
                opt.project = str(ROOT / "runs/evolve")
            opt.exist_ok, opt.resume = (
                opt.resume,
                False,
            )  # pass resume to exist_ok and disable resume
        if opt.name == "cfg":
            opt.name = Path(opt.cfg).stem  # use model.yaml as name
        # build the save directory from opt.project, e.g. runs/train/exp18
        opt.save_dir = str(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok))
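The last line above turns runs/train/exp into runs/train/exp2, runs/train/exp3 and so on when earlier runs exist. The sketch below illustrates that naming idea only; `next_run_dir` is a hypothetical stand-in and not the repository's increment_path, whose exact behavior may differ.

```python
import tempfile
from pathlib import Path


def next_run_dir(path, exist_ok=False):
    """Return `path` if it is unused (or exist_ok), else the first free path2, path3, ..."""
    path = Path(path)
    if exist_ok or not path.exists():
        return path
    n = 2
    while Path(f"{path}{n}").exists():
        n += 1
    return Path(f"{path}{n}")


with tempfile.TemporaryDirectory() as root:
    base = Path(root) / "runs" / "train" / "exp"
    first = next_run_dir(base)
    first.mkdir(parents=True)
    second = next_run_dir(base)
    second.mkdir()
    third = next_run_dir(base)
    reused = next_run_dir(base, exist_ok=True)
    names = [first.name, second.name, third.name, reused.name]

print(names)
```

With exist_ok set, the existing directory is reused, which is why the evolve branch above passes the old resume flag into opt.exist_ok.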

3.3 DDP mode

DDP mode setup

    # 3. DDP mode setup
    """select_device
    select_device: sets the device of the current script, cpu or cuda.
    DDP mode can be used only with cuda and more than one GPU; otherwise an error is raised. batch_size must be divisible by the total number of processes.
    DDP mode also does not support AutoBatch, so the batch size must be specified manually.
    """
    device = select_device(opt.device, batch_size=opt.batch_size)
    if LOCAL_RANK != -1:
        msg = "is not compatible with YOLOv5 Multi-GPU DDP training"
        assert not opt.image_weights, f"--image-weights {msg}"
        assert not opt.evolve, f"--evolve {msg}"
        assert opt.batch_size != -1, f"AutoBatch with --batch-size -1 {msg}, please pass a valid --batch-size"
        assert opt.batch_size % WORLD_SIZE == 0, f"--batch-size {opt.batch_size} must be multiple of WORLD_SIZE"
        assert flow.cuda.device_count() > LOCAL_RANK, "insufficient CUDA devices for DDP command"
        flow.cuda.set_device(LOCAL_RANK)
        device = flow.device("cuda", LOCAL_RANK)
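The assertions above can be restated as a small pure-Python check. This is a sketch for illustration: `ddp_option_problems` and `per_process_batch` are hypothetical helpers, and the messages are paraphrased rather than copied from the script.

```python
def ddp_option_problems(batch_size, world_size, image_weights=False, evolve=False):
    """Return a list of option conflicts; an empty list means DDP can start."""
    problems = []
    if image_weights:
        problems.append("--image-weights is not compatible with DDP training")
    if evolve:
        problems.append("--evolve is not compatible with DDP training")
    if batch_size == -1:
        problems.append("AutoBatch (--batch-size -1) is not compatible with DDP training")
    elif batch_size % world_size != 0:
        problems.append(f"--batch-size {batch_size} must be a multiple of WORLD_SIZE={world_size}")
    return problems


def per_process_batch(batch_size, world_size):
    """Each process loads batch_size // WORLD_SIZE images per step."""
    return batch_size // world_size


ok = ddp_option_problems(batch_size=64, world_size=4)
bad = ddp_option_problems(batch_size=30, world_size=4, evolve=True)
print(ok, bad, per_process_batch(64, 4))
```

The divisibility rule exists because the total batch is split evenly across processes when the dataloader is created in section 4.9.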

3.4 Train

Without the evolution algorithm, train normally.

    # Train
    if not opt.evolve:
        # without hyperparameter evolution, call train() directly to start training
        train(opt.hyp, opt, device, callbacks)

3.5 Evolve hyperparameters (optional)

Genetic evolution: evolve the best hyperparameters first, then train.

    # otherwise use hyperparameter evolution (a genetic algorithm) to find the best hyperparameters before training
    else:
        # Hyperparameter evolution metadata (mutation scale 0-1, lower_limit, upper_limit)
        meta = {
            "lr0": (1, 1e-5, 1e-1),  # initial learning rate (SGD=1E-2, Adam=1E-3)
            "lrf": (1, 0.01, 1.0),  # final OneCycleLR learning rate (lr0 * lrf)
            "momentum": (0.3, 0.6, 0.98),  # SGD momentum/Adam beta1
            "weight_decay": (1, 0.0, 0.001),  # optimizer weight decay
            "warmup_epochs": (1, 0.0, 5.0),  # warmup epochs (fractions ok)
            "warmup_momentum": (1, 0.0, 0.95),  # warmup initial momentum
            "warmup_bias_lr": (1, 0.0, 0.2),  # warmup initial bias lr
            "box": (1, 0.02, 0.2),  # box loss gain
            "cls": (1, 0.2, 4.0),  # cls loss gain
            "cls_pw": (1, 0.5, 2.0),  # cls BCELoss positive_weight
            "obj": (1, 0.2, 4.0),  # obj loss gain (scale with pixels)
            "obj_pw": (1, 0.5, 2.0),  # obj BCELoss positive_weight
            "iou_t": (0, 0.1, 0.7),  # IoU training threshold
            "anchor_t": (1, 2.0, 8.0),  # anchor-multiple threshold
            "anchors": (2, 2.0, 10.0),  # anchors per output grid (0 to ignore)
            "fl_gamma": (0, 0.0, 2.0),  # focal loss gamma (efficientDet default gamma=1.5)
            "hsv_h": (1, 0.0, 0.1),  # image HSV-Hue augmentation (fraction)
            "hsv_s": (1, 0.0, 0.9),  # image HSV-Saturation augmentation (fraction)
            "hsv_v": (1, 0.0, 0.9),  # image HSV-Value augmentation (fraction)
            "degrees": (1, 0.0, 45.0),  # image rotation (+/- deg)
            "translate": (1, 0.0, 0.9),  # image translation (+/- fraction)
            "scale": (1, 0.0, 0.9),  # image scale (+/- gain)
            "shear": (1, 0.0, 10.0),  # image shear (+/- deg)
            "perspective": (0, 0.0, 0.001),  # image perspective (+/- fraction), range 0-0.001
            "flipud": (1, 0.0, 1.0),  # image flip up-down (probability)
            "fliplr": (0, 0.0, 1.0),  # image flip left-right (probability)
            "mosaic": (1, 0.0, 1.0),  # image mosaic (probability)
            "mixup": (1, 0.0, 1.0),  # image mixup (probability)
            "copy_paste": (1, 0.0, 1.0),  # segment copy-paste (probability)
        }

        with open(opt.hyp, errors="ignore") as f:  # load the initial hyperparameters
            hyp = yaml.safe_load(f)  # load hyps dict
            if "anchors" not in hyp:  # anchors commented in hyp.yaml
                hyp["anchors"] = 3
        opt.noval, opt.nosave, save_dir = (
            True,
            True,
            Path(opt.save_dir),
        )  # only val/save final epoch
        # ei = [isinstance(x, (int, float)) for x in hyp.values()]  # evolvable indices
        # evolve_yaml: where the evolved hyperparameters are saved
        evolve_yaml, evolve_csv = save_dir / "hyp_evolve.yaml", save_dir / "evolve.csv"
        if opt.bucket:
            os.system(f"gsutil cp gs://{opt.bucket}/evolve.csv {evolve_csv}")  # download evolve.csv if exists

        """
        Hyperparameters are evolved with a genetic algorithm, for 300 generations by default.
        The principle is to derive a base hyp from the hyps of earlier training runs and then mutate it. The results of each
        earlier generation determine the weight of each earlier hyp. Given the hyps and their weights, there are two ways to evolve:
        1. Randomly pick one earlier hyp as the base hyp according to the weights: random.choices(range(n), weights=w)
        2. Merge all earlier hyps into one base hyp according to the weights: (x * w.reshape(n, 1)).sum(0) / w.sum()
        evolve.csv records the results + hyp of every generation.
        In each generation, the earlier hyps are sorted by their results in descending order,
        and the fitness function is used to compute the weight of each earlier hyp
        (fitness is the value we want to maximize. In YOLOv5, the fitness function is a weighted combination of [P, R, mAP@.5, mAP@.5-.95]).
        The evolution method is then chosen and the mutation is applied.
        This part of the code is not essential and is fairly hard to follow. Unless you have a specific need, you can skip it,
        because normal training does not use hyperparameter evolution.
        """

        for _ in range(opt.evolve):  # generations to evolve
            if evolve_csv.exists():  # if evolve.csv exists: select best hyps and mutate
                # Select parent(s)
                parent = "single"  # parent selection method: 'single' or 'weighted'
                x = np.loadtxt(evolve_csv, ndmin=2, delimiter=",", skiprows=1)
                n = min(5, len(x))  # number of previous results to consider
                # fitness is the value we want to maximize. In YOLOv5 it is a weighted combination of [P, R, mAP@.5, mAP@.5-.95]
                x = x[np.argsort(-fitness(x))][:n]  # top n mutations
                w = fitness(x) - fitness(x).min() + 1e-6  # weights (sum > 0)
                if parent == "single" or len(x) == 1:
                    # x = x[random.randint(0, n - 1)]  # random selection
                    x = x[random.choices(range(n), weights=w)[0]]  # weighted selection
                elif parent == "weighted":
                    x = (x * w.reshape(n, 1)).sum(0) / w.sum()  # weighted combination

                # Mutate
                mp, s = 0.8, 0.2  # mutation probability, sigma
                npr = np.random
                npr.seed(int(time.time()))
                g = np.array([meta[k][0] for k in hyp.keys()])  # gains 0-1
                ng = len(meta)
                v = np.ones(ng)
                while all(v == 1):  # mutate until a change occurs (prevent duplicates)
                    v = (g * (npr.random(ng) < mp) * npr.randn(ng) * npr.random() * s + 1).clip(0.3, 3.0)
                for i, k in enumerate(hyp.keys()):  # plt.hist(v.ravel(), 300)
                    hyp[k] = float(x[i + 7] * v[i])  # mutate

            # Constrain to limits
            for k, v in meta.items():
                hyp[k] = max(hyp[k], v[1])  # lower limit
                hyp[k] = min(hyp[k], v[2])  # upper limit
                hyp[k] = round(hyp[k], 5)  # significant digits

            # Train mutation
            results = train(hyp.copy(), opt, device, callbacks)
            # callbacks https://start.oneflow.org/oneflow-yolo-doc/source_code_interpretation/callbacks_py.html
            callbacks = Callbacks()
            # Write mutation results
            print_mutation(results, hyp.copy(), save_dir, opt.bucket)

        # Plot results
        plot_evolve(evolve_csv)
        LOGGER.info(
            f"Hyperparameter evolution finished {opt.evolve} generations\n"
            f"Results saved to {colorstr('bold', save_dir)}\n"
            f"Usage example: $ python train.py --hyp {evolve_yaml}"
        )

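The evolution step is easier to follow on a toy example. The sketch below reimplements the "single" parent selection and the mutation in plain Python with two hyperparameters. It is an illustration under assumptions: the fitness weights (0.0, 0.0, 0.1, 0.9) are assumed from upstream YOLOv5 and may differ in one-yolov5, and `select_parent`, `mutate` and the history layout are hypothetical simplifications of the NumPy code above.

```python
import random


def fitness(metrics, w=(0.0, 0.0, 0.1, 0.9)):
    """Weighted sum of [P, R, mAP@.5, mAP@.5-.95]; the weights are an assumption."""
    return sum(m * wi for m, wi in zip(metrics[:4], w))


def select_parent(history, rng, top_n=5):
    """Pick one earlier generation, favouring higher fitness ('single' mode)."""
    ranked = sorted(history, key=lambda h: fitness(h["metrics"]), reverse=True)[:top_n]
    fits = [fitness(h["metrics"]) for h in ranked]
    weights = [f - min(fits) + 1e-6 for f in fits]  # all weights stay positive
    return rng.choices(ranked, weights=weights)[0]


def mutate(parent_hyp, meta, rng, mp=0.8, sigma=0.2):
    """Scale each hyperparameter by a random factor, then clamp it to its limits."""
    keys = list(parent_hyp)
    factors = [1.0] * len(keys)
    while all(f == 1.0 for f in factors):  # retry until at least one value changes
        scale = rng.random()
        factors = [
            min(max(meta[k][0] * (rng.random() < mp) * rng.gauss(0, 1) * scale * sigma + 1, 0.3), 3.0)
            for k in keys
        ]
    child = {}
    for k, f in zip(keys, factors):
        _gain, low, high = meta[k]
        child[k] = round(min(max(parent_hyp[k] * f, low), high), 5)
    return child


meta = {"lr0": (1, 1e-5, 1e-1), "momentum": (0.3, 0.6, 0.98)}
history = [
    {"metrics": [0.6, 0.5, 0.40, 0.20], "hyp": {"lr0": 0.01, "momentum": 0.937}},
    {"metrics": [0.7, 0.6, 0.55, 0.35], "hyp": {"lr0": 0.02, "momentum": 0.90}},
]
rng = random.Random(0)
parent = select_parent(history, rng)
child = mutate(parent["hyp"], meta, rng)
print(parent["hyp"], child)
```

A gain of 0 in meta (as for iou_t or fliplr above) freezes that hyperparameter, because its mutation factor is always exactly 1.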
4 def train(hyp, opt, device, callbacks):

4.1 Load the arguments

"""
:params hyp: data/hyps/hyp.scratch.yaml hyp dictionary
:params opt: the opt arguments from main
:params device: current device
:params callbacks: logging-related callbacks https://start.oneflow.org/oneflow-yolo-doc/source_code_interpretation/callbacks_py.html
"""
def train(hyp, opt, device, callbacks):  # hyp is path/to/hyp.yaml or hyp dictionary
    (save_dir, epochs, batch_size, weights, single_cls, evolve, data, cfg, resume, noval, nosave, workers, freeze, bbox_iou_optim) = (
        Path(opt.save_dir),
        opt.epochs,
        opt.batch_size,
        opt.weights,
        opt.single_cls,
        opt.evolve,
        opt.data,
        opt.cfg,
        opt.resume,
        opt.noval,
        opt.nosave,
        opt.workers,
        opt.freeze,
        opt.bbox_iou_optim,
    )

4.2 初始化参数和配置信息

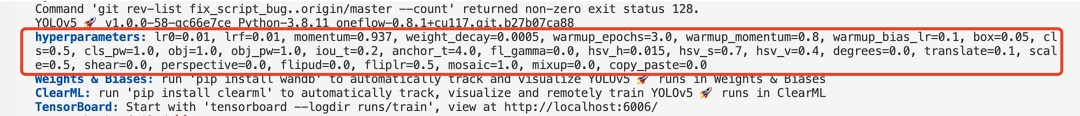

下面输出超参数的时候截图如下:

#和日志相关的回调函数,记录当前代码执行的阶段

callbacks.run("on_pretrain_routine_start")

#保存权重路径如runs/train/exp18/weights

w=save_dir/"weights"#weightsdir

(w.parentifevolveelsew).mkdir(parents=True,exist_ok=True)#makedir

last,best=w/"last",w/"best"

#Hyperparameters超参

ifisinstance(hyp,str):

withopen(hyp,errors="ignore")asf:

#loadhypsdict加载超参信息

hyp=yaml.safe_load(f)#loadhypsdict

#日志输出超参信息hyperparameters:...

LOGGER.info(colorstr("hyperparameters:")+",".join(f"{k}={v}"fork,vinhyp.items()))

opt.hyp=hyp.copy()#forsavinghypstocheckpoints

#保存运行时的参数配置

ifnotevolve:

yaml_save(save_dir/"hyp.yaml",hyp)

yaml_save(save_dir/"opt.yaml",vars(opt))

#Loggers

data_dict=None

ifRANKin{-1,0}:

#初始化Loggers对象

#def__init__(self,save_dir=None,weights=None,opt=None,hyp=None,logger=None,include=LOGGERS):

loggers=Loggers(save_dir,weights,opt,hyp,LOGGER)#loggersinstance

#Registeractions

forkinmethods(loggers):#注册钩子https://github.com/Oneflow-Inc/one-yolov5/blob/main/utils/callbacks.py

callbacks.register_action(k,callback=getattr(loggers,k))

#Config

#是否需要画图:所有的labels信息、迭代的epochs、训练结果等

plots=notevolveandnotopt.noplots#createplots

cuda=device.type!="cpu"

#初始化随机数种子

init_seeds(opt.seed+1+RANK,deterministic=True)

data_dict=data_dictorcheck_dataset(data)#checkifNone

train_path,val_path=data_dict["train"],data_dict["val"]

#nc:numberofclasses数据集有多少种类别

nc=1ifsingle_clselseint(data_dict["nc"])#numberofclasses

#如果只有一个类别并且data_dict里没有names这个key的话,我们将names设置为["item"]代表目标

names=["item"]ifsingle_clsandlen(data_dict["names"])!=1elsedata_dict["names"]#classnames

assertlen(names)==nc,f"{len(names)}namesfoundfornc={nc}datasetin{data}"#check

#当前数据集是否是coco数据集(80个类别)

is_coco=isinstance(val_path,str)andval_path.endswith("coco/val2017.txt")#COCOdataset

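4.3 model

    # Check whether the weights name is valid:
    #   valid:   pretrained = True
    #   invalid: pretrained = False
    pretrained = check_wights(weights)
    # load the model
    if pretrained:
        # use pretrained weights
        # ---------------------------------------------------------#
        # load the model and its parameters
        ckpt = flow.load(weights, map_location="cpu")  # load checkpoint to CPU to avoid CUDA memory leak
        # The model can be created in two ways: from opt.cfg or from ckpt['model'].yaml.
        # The difference is whether resume is used: resuming sets opt.cfg to "" so the model is created from ckpt['model'].yaml.
        # This also decides whether the anchor keys are excluded below: when resuming, nothing is excluded and the
        # checkpoint anchors are loaded; otherwise, if a cfg or hyp anchors are given, the anchor keys are not loaded.
        # Reason: a saved model stores its anchors. If the user has defined custom anchors, loading the checkpoint's
        # anchors would overwrite them with the original COCO-based anchors.
        # See https://github.com/ultralytics/yolov5/issues/459
        # That is why intersect_dicts() below skips the keys listed in exclude.
        model = Model(cfg or ckpt["model"].yaml, ch=3, nc=nc, anchors=hyp.get("anchors")).to(device)  # create
        exclude = ["anchor"] if (cfg or hyp.get("anchors")) and not resume else []  # exclude keys
        csd = ckpt["model"].float().state_dict()  # checkpoint state_dict as FP32
        # keep only matching key/value pairs and drop the excluded keys
        csd = intersect_dicts(csd, model.state_dict(), exclude=exclude)  # intersect
        # load the model weights
        model.load_state_dict(csd, strict=False)  # load
        LOGGER.info(f"Transferred {len(csd)}/{len(model.state_dict())} items from {weights}")  # report
    else:
        # no pretrained weights
        model = Model(cfg, ch=3, nc=nc, anchors=hyp.get("anchors")).to(device)  # create
    # Note: AMP training in one-yolov5 is still being developed and debugged, so it is disabled for now and will be
    # enabled once supported. Half-precision inference is already supported.
    # amp = check_amp(model)  # check AMP
    amp = False

    # Freeze
    # This only shows how layers can be frozen. The author does not recommend freezing layers: training all layers
    # gives better performance, although it is slower.
    freeze = [f"model.{x}." for x in (freeze if len(freeze) > 1 else range(freeze[0]))]  # layers to freeze
    for k, v in model.named_parameters():
        v.requires_grad = True  # train all layers
        # NaN to 0 (commented for erratic training results)
        # v.register_hook(lambda x: torch.nan_to_num(x))
        if any(x in k for x in freeze):
            LOGGER.info(f"freezing {k}")
            v.requires_grad = False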
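The freeze expression at the end of this block is compact, so a small standalone sketch may help. It mirrors the list comprehension and the substring test from the code above; `freeze_prefixes` and `is_frozen` are hypothetical helper names, and the parameter names used in the example are made up.

```python
def freeze_prefixes(freeze):
    """Expand the freeze option into parameter-name prefixes such as 'model.3.'."""
    layers = freeze if len(freeze) > 1 else range(freeze[0])
    return [f"model.{x}." for x in layers]


def is_frozen(param_name, prefixes):
    """A parameter is frozen when any prefix occurs in its name."""
    return any(p in param_name for p in prefixes)


first_three = freeze_prefixes([3])     # a single value N freezes layers 0..N-1
explicit = freeze_prefixes([0, 5])     # several values freeze exactly those layers
nothing = freeze_prefixes([0])         # range(0) is empty, so nothing is frozen

print(first_three, explicit, nothing)
print(is_frozen("model.1.conv.weight", first_three))
print(is_frozen("model.10.conv.weight", first_three))
```

The trailing dot in each prefix matters: without it, "model.1" would also match "model.10" and freeze an unintended layer.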

4.4 Optimizer

Select the optimizer.

    # Optimizer
    nbs = 64  # nominal batch size
    accumulate = max(round(nbs / batch_size), 1)  # accumulate loss before optimizing
    hyp["weight_decay"] *= batch_size * accumulate / nbs  # scale weight_decay
    optimizer = smart_optimizer(model, opt.optimizer, hyp["lr0"], hyp["momentum"], hyp["weight_decay"])
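The two arithmetic lines above keep the effective batch size close to the nominal 64 and rescale weight decay to match. The following sketch repeats that arithmetic for a few batch sizes; `accumulation_plan` is a hypothetical helper and 0.0005 is just an example weight decay.

```python
def accumulation_plan(batch_size, weight_decay, nbs=64):
    """Return (accumulate, scaled_weight_decay) for a given batch size."""
    accumulate = max(round(nbs / batch_size), 1)  # batches accumulated per optimizer step
    scaled_decay = weight_decay * batch_size * accumulate / nbs
    return accumulate, scaled_decay


for bs in (16, 48, 64, 128):
    acc, wd = accumulation_plan(bs, weight_decay=0.0005)
    print(f"batch_size={bs:3d} accumulate={acc} effective_batch={bs * acc:3d} weight_decay={wd:.6f}")
```

With batch size 16, gradients from 4 batches are accumulated and the weight decay is unchanged. With batch size 128, no accumulation happens and the weight decay doubles because the effective batch is twice the nominal one.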

4.5 Learning rate

    # Scheduler
    if opt.cos_lr:
        # one-cycle learning rate https://arxiv.org/pdf/1803.09820.pdf
        lf = one_cycle(1, hyp["lrf"], epochs)  # cosine 1->hyp['lrf']
    else:
        # linear learning rate
        def f(x):
            return (1 - x / epochs) * (1.0 - hyp["lrf"]) + hyp["lrf"]

        lf = f  # linear
    # instantiate the scheduler
    scheduler = lr_scheduler.LambdaLR(optimizer, lr_lambda=lf)  # plot_lr_scheduler(optimizer, scheduler, epochs)
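Both branches produce a function lf(epoch) that returns a multiplier applied to the initial learning rate, going from 1 down to hyp["lrf"]. The sketch below compares the two shapes. The body of `one_cycle` is an assumption modeled on the upstream YOLOv5 helper of the same name, and `linear` is a hypothetical wrapper around the function f shown above.

```python
import math


def one_cycle(y1=0.0, y2=1.0, steps=100):
    """Cosine ramp from y1 to y2 over `steps` epochs (assumed form of the helper)."""
    return lambda x: ((1 - math.cos(x * math.pi / steps)) / 2) * (y2 - y1) + y1


def linear(lrf, epochs):
    """Linear ramp from 1.0 down to lrf, as in the else branch above."""
    return lambda x: (1 - x / epochs) * (1.0 - lrf) + lrf


epochs, lrf = 100, 0.01
cosine_lf = one_cycle(1, lrf, epochs)
linear_lf = linear(lrf, epochs)
for e in (0, 25, 50, 75, 100):
    print(f"epoch {e:3d}: cosine x{cosine_lf(e):.4f}  linear x{linear_lf(e):.4f}")
```

The two schedules agree at the start, midpoint and end. The cosine version decays more slowly early on and more slowly again near the end.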

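4.6 EMA

EMA (exponential moving average) averages the model parameters, giving more weight to recent values, with the aim of improving test metrics and making the model more robust. The EMA model is created only in single-GPU training or on the main process (RANK in {-1, 0}).

    # EMA
    ema = ModelEMA(model) if RANK in {-1, 0} else None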
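The idea behind ModelEMA can be shown on plain numbers. The sketch below is not the repository's class: `ScalarEMA` is a hypothetical stand-in that tracks a dict of floats, and the decay ramp with decay=0.9999 and tau=2000 is an assumption modeled on upstream YOLOv5.

```python
import math


class ScalarEMA:
    """Exponential moving average over a dict of float parameters."""

    def __init__(self, params, decay=0.9999, tau=2000):
        self.ema = dict(params)  # the averaged copy used for validation
        self.updates = 0
        # the decay ramps up from 0 so that early updates follow the model closely
        self.decay = lambda n: decay * (1 - math.exp(-n / tau))

    def update(self, params):
        self.updates += 1
        d = self.decay(self.updates)
        for k, v in params.items():
            self.ema[k] = d * self.ema[k] + (1 - d) * v


ema = ScalarEMA({"w": 0.0})
ema.update({"w": 1.0})
print(ema.ema["w"], ema.decay(1), ema.decay(10**6))
```

After many updates the decay approaches 0.9999, so each new set of weights contributes only a small fraction and the averaged model changes smoothly.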

4.7 Resume

Resume training from a checkpoint.

    # Resume
    best_fitness, start_epoch = 0.0, 0
    if pretrained:
        if resume:
            best_fitness, start_epoch, epochs = smart_resume(ckpt, optimizer, ema, weights, epochs, resume)
        del ckpt, csd

4.8 SyncBatchNorm

SyncBatchNorm can improve the accuracy of multi-GPU training, but it slows training down noticeably. It only applies to multi-GPU DistributedDataParallel training.

    # SyncBatchNorm
    if opt.sync_bn and cuda and RANK != -1:
        model = flow.nn.SyncBatchNorm.convert_sync_batchnorm(model).to(device)
        LOGGER.info("Using SyncBatchNorm()")

4.9 Data loading

    # Trainloader https://start.oneflow.org/oneflow-yolo-doc/source_code_interpretation/utils/dataladers_py.html
    train_loader, dataset = create_dataloader(
        train_path,
        imgsz,
        batch_size // WORLD_SIZE,
        gs,
        single_cls,
        hyp=hyp,
        augment=True,
        cache=None if opt.cache == "val" else opt.cache,
        rect=opt.rect,
        rank=LOCAL_RANK,
        workers=workers,
        image_weights=opt.image_weights,
        quad=opt.quad,
        prefix=colorstr("train: "),
        shuffle=True,
    )
    labels = np.concatenate(dataset.labels, 0)
    # take the largest class index in the labels and compare it with the number of classes;
    # a value greater than or equal to nc indicates a problem
    mlc = int(labels[:, 0].max())  # max label class
    assert mlc < nc, f"Label class {mlc} exceeds nc={nc} in {data}. Possible class labels are 0-{nc - 1}"

    # Process 0
    if RANK in {-1, 0}:
        val_loader = create_dataloader(
            val_path,
            imgsz,
            batch_size // WORLD_SIZE * 2,
            gs,
            single_cls,
            hyp=hyp,
            cache=None if noval else opt.cache,
            rect=True,
            rank=-1,
            workers=workers * 2,
            pad=0.5,
            prefix=colorstr("val: "),
        )[0]

        # when not resuming
        if not resume:
            if plots:
                plot_labels(labels, names, save_dir)

            # Anchors
            # Compare the default anchors with the width/height of the dataset's label boxes.
            # A label with height h and width w matches an anchor with height h_a and width w_a when both ratios
            # h/h_a and w/w_a lie within (1/hyp['anchor_t'], hyp['anchor_t']).
            # If bpr is below 98%, new anchors are clustered with k-means.
            if not opt.noautoanchor:
                # check_anchors: computes bpr to decide whether the anchors need to change; if so, k-means recomputes them.
                # bpr (best possible recall): ground-truth boxes that can be recalled at best / all ground-truth boxes.
                # The maximum is 1, and larger is better.
                # Below 0.98, k-means plus genetic evolution is used to select anchor boxes that fit the dataset better.
                check_anchors(dataset, model=model, thr=hyp["anchor_t"], imgsz=imgsz)
            model.half().float()  # pre-reduce anchor precision

        callbacks.run("on_pretrain_routine_end")
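The best possible recall described in the comments above can be computed by hand for a few boxes. The sketch below is a simplified pure-Python version of the idea, not the check_anchors implementation: `best_possible_recall` is a hypothetical helper, the anchors and labels are made-up values in pixels, and thr=4.0 is used as an example value for hyp['anchor_t'].

```python
def best_possible_recall(label_wh, anchors, thr=4.0):
    """Fraction of labels whose width and height ratios to some anchor stay within (1/thr, thr)."""
    recalled = 0
    for w, h in label_wh:
        best = 0.0
        for aw, ah in anchors:
            rw, rh = w / aw, h / ah
            # the worse of the two sides decides how well this anchor fits
            worst_side = min(min(rw, 1 / rw), min(rh, 1 / rh))
            best = max(best, worst_side)
        if best > 1 / thr:
            recalled += 1
    return recalled / len(label_wh)


anchors = [(10, 13), (30, 61), (116, 90)]
labels_wh = [(12, 15), (100, 100), (400, 400), (2, 2)]
bpr = best_possible_recall(labels_wh, anchors, thr=4.0)
print(bpr)
```

Here the very large and very small boxes have no anchor within a factor of 4, so bpr is 0.5, well below the 0.98 threshold at which new anchors would be computed.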

4.10 DDP mode

    # DDP mode
    if cuda and RANK != -1:
        model = smart_DDP(model)

4.11 Attach model attributes

    # Model attributes
    nl = de_parallel(model).model[-1].nl  # number of detection layers (to scale hyps)
    hyp["box"] *= 3 / nl  # scale to layers
    hyp["cls"] *= nc / 80 * 3 / nl  # scale to classes and layers
    hyp["obj"] *= (imgsz / 640) ** 2 * 3 / nl  # scale to image size and layers
    hyp["label_smoothing"] = opt.label_smoothing
    model.nc = nc  # attach number of classes to model
    model.hyp = hyp  # attach hyperparameters to model
    # class weights derived from the training labels (inversely proportional to the number of objects per class, i.e. class frequency)
    model.class_weights = labels_to_class_weights(dataset.labels, nc).to(device) * nc  # attach class weights
    model.names = names  # class names
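The three loss gains are rescaled relative to a reference setup of 3 detection layers, 80 classes and 640-pixel images. The sketch below applies the same formulas to example values; `scale_loss_gains` is a hypothetical helper and the gain values are illustrative.

```python
def scale_loss_gains(hyp, nl, nc, imgsz):
    """Return a copy of hyp with box/cls/obj gains scaled as in the code above."""
    scaled = dict(hyp)
    scaled["box"] *= 3 / nl  # scale to layers
    scaled["cls"] *= nc / 80 * 3 / nl  # scale to classes and layers
    scaled["obj"] *= (imgsz / 640) ** 2 * 3 / nl  # scale to image size and layers
    return scaled


base = {"box": 0.05, "cls": 0.5, "obj": 1.0}
reference = scale_loss_gains(base, nl=3, nc=80, imgsz=640)
custom = scale_loss_gains(base, nl=3, nc=20, imgsz=1280)
print(reference)
print(custom)
```

With 20 classes the classification gain drops to a quarter, and doubling the image side quadruples the objectness gain because the number of pixels grows with the square of the side.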

4.12 Start training

    # Start training
    t0 = time.time()
    nb = len(train_loader)  # number of batches
    # number of warm-up iterations: at least 100
    nw = max(round(hyp["warmup_epochs"] * nb), 100)  # number of warmup iterations, max(3 epochs, 100 iterations)
    # nw = min(nw, (epochs - start_epoch) / 2 * nb)  # limit warmup to < 1/2 of training
    last_opt_step = -1
    # initialize maps (the mAP of each class) and results
    maps = np.zeros(nc)  # mAP per class
    results = (0, 0, 0, 0, 0, 0, 0)  # P, R, mAP@.5, mAP@.5-.95, val_loss(box, obj, cls)
    # set the epoch the learning rate decay has reached, so that a resumed run continues the decay where it stopped
    scheduler.last_epoch = start_epoch - 1  # do not move
    # scaler = flow.cuda.amp.GradScaler(enabled=amp)  # AMP loss scaling; will be enabled once one-yolov5 supports AMP training
    stopper, _ = EarlyStopping(patience=opt.patience), False
    # initialize the loss function
    # bbox_iou_optim is an argument added by one-yolov5. It enables a faster bbox_iou function, which makes training faster than with PyTorch.
    compute_loss = ComputeLoss(model, bbox_iou_optim=bbox_iou_optim)  # init loss class
    callbacks.run("on_train_start")
    # print log information
    LOGGER.info(
        f"Image sizes {imgsz} train, {imgsz} val\n"
        f"Using {train_loader.num_workers * WORLD_SIZE} dataloader workers\n"
        f"Logging results to {colorstr('bold', save_dir)}\n"
        f"Starting training for {epochs} epochs..."
    )

    for epoch in range(start_epoch, epochs):  # epoch ------------------------------------------------------------------
        callbacks.run("on_train_epoch_start")
        model.train()

        # Update image weights (optional, single-GPU only)
        # Not necessarily beneficial; off by default
        # If enabled, images are sampled according to the per-class weights of the dataset
        if opt.image_weights:
            # Combine model.class_weights (per-class weights, smaller for frequent classes), maps and the classes contained
            # in each image, then draw image indices with random.choices for sampling
            # (the author's own sampling strategy; its benefit is not guaranteed)
            cw = model.class_weights.cpu().numpy() * (1 - maps) ** 2 / nc  # class weights
            # labels_to_image_weights: uses the ground-truth labels of each image and the per-class weights obtained from
            # labels_to_class_weights before training to compute a weight for every image in the dataset.
            # https://github.com/Oneflow-Inc/oneflow-yolo-doc/blob/master/docs/source_code_interpretation/utils/general_py.md#192-labels_to_image_weights
            iw = labels_to_image_weights(dataset.labels, nc=nc, class_weights=cw)  # image weights
            dataset.indices = random.choices(range(dataset.n), weights=iw, k=dataset.n)  # rand weighted idx

        # running mean of the losses printed during training
        mloss = flow.zeros(3, device=device)  # mean losses
        if RANK != -1:
            # In DDP mode the sampler shuffles with epoch + seed as the random seed, so every epoch uses a different seed
            train_loader.sampler.set_epoch(epoch)
        # progress bar for displaying information
        pbar = enumerate(train_loader)
        LOGGER.info(("\n" + "%11s" * 7) % ("Epoch", "GPU_mem", "box_loss", "obj_loss", "cls_loss", "Instances", "Size"))
        if RANK in {-1, 0}:
            # create the progress bar
            pbar = tqdm(pbar, total=nb, bar_format="{l_bar}{bar:10}{r_bar}{bar:-10b}")  # progress bar
        # zero the gradients
        optimizer.zero_grad()
        for i, (
            imgs,
            targets,
            paths,
            _,
        ) in pbar:  # batch -------------------------------------------------------------

            callbacks.run("on_train_batch_start")
            # ni: index of the current iteration
            ni = i + nb * epoch  # number integrated batches (since train start)
            imgs = imgs.to(device).float() / 255  # uint8 to float32, 0-255 to 0.0-1.0

            # Warmup
            # During the first nw iterations, use a smaller accumulate, learning rate and momentum and ramp them up gradually
            if ni <= nw:
                xi = [0, nw]  # x interp
                # compute_loss.gr = np.interp(ni, xi, [0.0, 1.0])  # iou loss ratio (obj_loss = 1.0 or iou)
                accumulate = max(1, np.interp(ni, xi, [1, nbs / batch_size]).round())
                for j, x in enumerate(optimizer.param_groups):
                    # bias lr falls from 0.1 to lr0, all other lrs rise from 0.0 to lr0
                    x["lr"] = np.interp(
                        ni,
                        xi,
                        [hyp["warmup_bias_lr"] if j == 0 else 0.0, x["initial_lr"] * lf(epoch)],
                    )
                    if "momentum" in x:
                        x["momentum"] = np.interp(ni, xi, [hyp["warmup_momentum"], hyp["momentum"]])

            # Multi-scale (off by default)
            # Multi-scale training: pick a random size (a multiple of gs) from [imgsz * 0.5, imgsz * 1.5 + gs)
            # and use it as the size of the current batch
            # imgsz: default training size; gs: maximum model stride = 32 (strides [32 16 8])
            if opt.multi_scale:
                # int() keeps random.randrange valid on Python versions that reject float arguments
                sz = random.randrange(int(imgsz * 0.5), int(imgsz * 1.5) + gs) // gs * gs  # size
                sf = sz / max(imgs.shape[2:])  # scale factor
                if sf != 1:
                    ns = [math.ceil(x * sf / gs) * gs for x in imgs.shape[2:]]  # new shape (stretched to gs-multiple)
                    # resize the batch (down- or up-sampling)
                    imgs = nn.functional.interpolate(imgs, size=ns, mode="bilinear", align_corners=False)

            # Forward
            pred = model(imgs)  # forward
            loss, loss_items = compute_loss(pred, targets.to(device))  # loss scaled by batch_size
            if RANK != -1:
                loss *= WORLD_SIZE  # gradient averaged between devices in DDP mode
            if opt.quad:
                loss *= 4.0

            # Backward
            # scaler.scale(loss).backward()
            loss.backward()

            # Optimize - https://pytorch.org/docs/master/notes/amp_examples.html
            # after `accumulate` backward passes (iterations), update the parameters once from the accumulated gradients
            if ni - last_opt_step >= accumulate:
                # optimizer.step updates the parameters
                optimizer.step()
                # zero the gradients
                optimizer.zero_grad()
                if ema:
                    # update the EMA after each optimizer step
                    ema.update(model)
                last_opt_step = ni

            # Log
            # Print the current epoch, the losses (box, obj, cls), the number of targets in the batch and the image size
            if RANK in {-1, 0}:
                mloss = (mloss * i + loss_items) / (i + 1)  # update mean losses
                pbar.set_description(("%11s" + "%11.4g" * 5) % (f"{epoch}/{epochs - 1}", *mloss, targets.shape[0], imgs.shape[-1]))
            # end batch ----------------------------------------------------------------

        # Scheduler
        lr = [x["lr"] for x in optimizer.param_groups]  # for loggers
        scheduler.step()

        if RANK in {-1, 0}:
            # mAP
            callbacks.run("on_train_epoch_end", epoch=epoch)
            ema.update_attr(model, include=["yaml", "nc", "hyp", "names", "stride", "class_weights"])
            final_epoch = (epoch + 1 == epochs) or stopper.possible_stop
            if not noval or final_epoch:  # Calculate mAP
                # validation uses the EMA model (exponential moving average of the model parameters)
                # results: [1] Precision: mean precision over all classes (at maximum F1)
                #          [1] Recall: mean recall over all classes
                #          [1] mAP@0.5: mean mAP@0.5 over all classes
                #          [1] mAP@0.5:0.95: mean mAP@0.5:0.95 over all classes
                #          [1] box_loss, obj_loss, cls_loss: validation regression, objectness and classification losses
                # maps: [80] AP of each class
                results, maps, _ = val.run(
                    data_dict,
                    batch_size=batch_size // WORLD_SIZE * 2,
                    imgsz=imgsz,
                    half=amp,
                    model=ema.ema,
                    single_cls=single_cls,
                    dataloader=val_loader,
                    save_dir=save_dir,
                    plots=False,
                    callbacks=callbacks,
                    compute_loss=compute_loss,
                )

            # Update best mAP
            # fi is the value we want to maximize. In YOLOv5, the fitness function is a weighted combination of [P, R, mAP@.5, mAP@.5-.95].
            fi = fitness(np.array(results).reshape(1, -1))  # weighted combination of [P, R, mAP@.5, mAP@.5-.95]
            # stop = stopper(epoch=epoch, fitness=fi)  # early stop check
            if fi > best_fitness:
                best_fitness = fi
            log_vals = list(mloss) + list(results) + lr
            callbacks.run("on_fit_epoch_end", log_vals, epoch, best_fitness, fi)

            # Save model
            if (not nosave) or (final_epoch and not evolve):  # if save
                ckpt = {
                    "epoch": epoch,
                    "best_fitness": best_fitness,
                    "model": deepcopy(de_parallel(model)).half(),
                    "ema": deepcopy(ema.ema).half(),
                    "updates": ema.updates,
                    "optimizer": optimizer.state_dict(),
                    "wandb_id": loggers.wandb.wandb_run.id if loggers.wandb else None,
                    "opt": vars(opt),
                    "date": datetime.now().isoformat(),
                }

                # Save last, best and delete
                model_save(ckpt, last)  # flow.save(ckpt, last)
                if best_fitness == fi:
                    model_save(ckpt, best)  # flow.save(ckpt, best)
                if opt.save_period > 0 and epoch % opt.save_period == 0:
                    print("is ok")
                    model_save(ckpt, w / f"epoch{epoch}")  # flow.save(ckpt, w / f"epoch{epoch}")
                del ckpt
                # Write: record the validation results (result.txt)
                callbacks.run("on_model_save", last, epoch, final_epoch, best_fitness, fi)
        # end epoch --------------------------------------------------------------------------
    # end training ---------------------------------------------------------------------------
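The warm-up inside the batch loop of this section interpolates three quantities between iteration 0 and iteration nw. The sketch below reproduces that interpolation in plain Python so the values can be inspected. `interp` is a minimal two-point stand-in for np.interp, `warmup_state` is a hypothetical helper, and the hyperparameter values are examples.

```python
def interp(x, xp, fp):
    """Two-point linear interpolation, clamped at both ends (like np.interp)."""
    (x0, x1), (y0, y1) = xp, fp
    if x <= x0:
        return y0
    if x >= x1:
        return y1
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def warmup_state(ni, nw, hyp, target_lr, nbs=64, batch_size=16, first_group=False):
    """Return (accumulate, lr, momentum) at iteration ni of the warm-up."""
    xi = [0, nw]
    accumulate = max(1, round(interp(ni, xi, [1, nbs / batch_size])))
    # the first parameter group starts at warmup_bias_lr, all others start at 0.0
    start_lr = hyp["warmup_bias_lr"] if first_group else 0.0
    lr = interp(ni, xi, [start_lr, target_lr])
    momentum = interp(ni, xi, [hyp["warmup_momentum"], hyp["momentum"]])
    return accumulate, lr, momentum


hyp = {"warmup_bias_lr": 0.1, "warmup_momentum": 0.8, "momentum": 0.937}
for ni in (0, 50, 100):
    print(ni, warmup_state(ni, nw=100, hyp=hyp, target_lr=0.01))
print(50, warmup_state(50, nw=100, hyp=hyp, target_lr=0.01, first_group=True))
```

During warm-up the accumulation count grows from 1 to nbs / batch_size, so early optimizer steps are taken more often on smaller effective batches while the learning rate and momentum ramp up.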

4.13 End

Print summary information.

Logging: print the training time, plot the training results (results1.png, confusion_matrix.png and the 'F1', 'PR', 'P', 'R' curves) and write the log messages.

Call val.run() to validate the accuracy of the model on the COCO dataset, then release GPU memory.

Validate a model's accuracy on COCO val or test-dev datasets. Note that pycocotools metrics may be ~1% better than the equivalent repo metrics, as is visible below, due to slight differences in mAP computation.

    if RANK in {-1, 0}:
        LOGGER.info(f"\n{epoch - start_epoch + 1} epochs completed in {(time.time() - t0) / 3600:.3f} hours")
        for f in last, best:
            if f.exists():
                strip_optimizer(f)  # strip optimizers
                if f is best:
                    LOGGER.info(f"\nValidating {f}...")
                    results, _, _ = val.run(
                        data_dict,
                        batch_size=batch_size // WORLD_SIZE * 2,
                        imgsz=imgsz,
                        model=attempt_load(f, device).half(),
                        iou_thres=0.65 if is_coco else 0.60,  # best pycocotools results at 0.65
                        single_cls=single_cls,
                        dataloader=val_loader,
                        save_dir=save_dir,
                        save_json=is_coco,
                        verbose=True,
                        plots=plots,
                        callbacks=callbacks,
                        compute_loss=compute_loss,
                    )  # val best model with plots
        callbacks.run("on_train_end", last, best, plots, epoch, results)
    flow.cuda.empty_cache()
    return results  # consumed by the evolution loop in main()

5 run function

Wraps the train interface so that the train.py script can be executed through a function call.

def run(**kwargs):
    # Usage: import train; train.run(data='coco128.yaml', imgsz=320, weights='yolov5m')
    opt = parse_opt(True)
    for k, v in kwargs.items():
        setattr(opt, k, v)  # set the attribute on opt
    main(opt)
    return opt
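The override mechanism in run() is just setattr on an argparse.Namespace. The sketch below shows what that implies. `parse_defaults` and `build_opt` are hypothetical stand-ins for parse_opt and run, and the default values are illustrative.

```python
import argparse


def parse_defaults(known=False):
    """Build a Namespace of default options without reading sys.argv."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--data", default="coco128.yaml")
    parser.add_argument("--imgsz", type=int, default=640)
    parser.add_argument("--epochs", type=int, default=300)
    return parser.parse_known_args([])[0] if known else parser.parse_args([])


def build_opt(**kwargs):
    """Start from the defaults and overwrite them with keyword arguments."""
    opt = parse_defaults(known=True)
    for k, v in kwargs.items():
        setattr(opt, k, v)  # no validation or type conversion happens here
    return opt


opt = build_opt(imgsz=320, not_a_real_option=True)
print(vars(opt))
```

Because setattr bypasses argparse, a misspelled keyword is silently added as a new attribute instead of raising an error, so keyword names passed to run() need to match the option names exactly.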

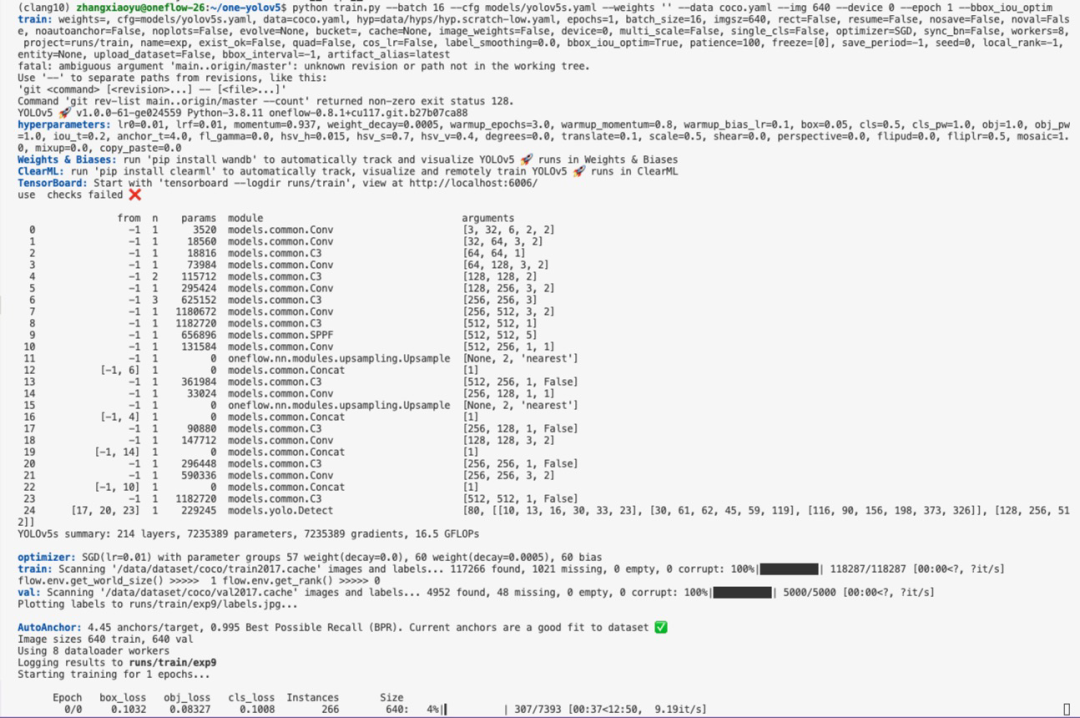

6 启动训练时效果展示


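Original title: "YOLOv5 Comprehensive Analysis Tutorial" Part 9: train.py line-by-line walkthrough

Source: WeChat official account GiantPandaCV (WeChat ID: GiantPandaCV). Please credit the source when reposting.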
