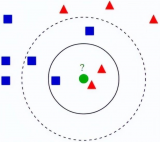

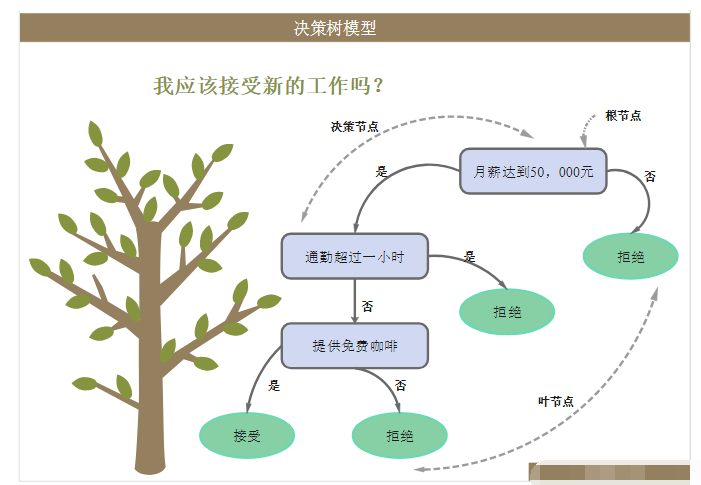

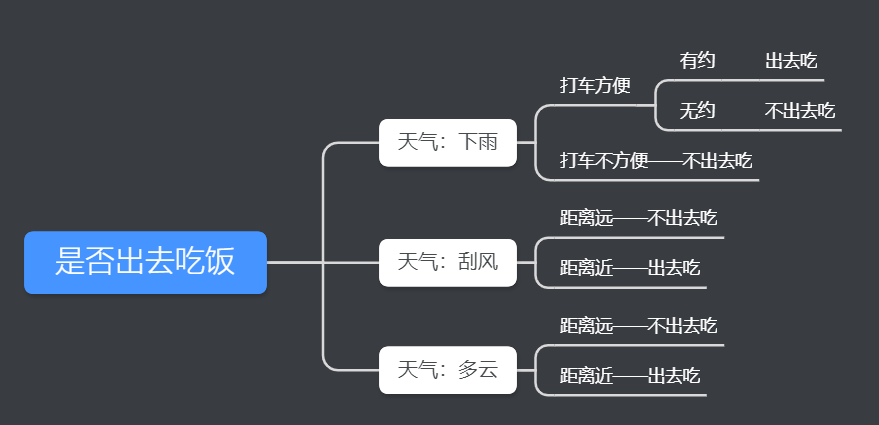

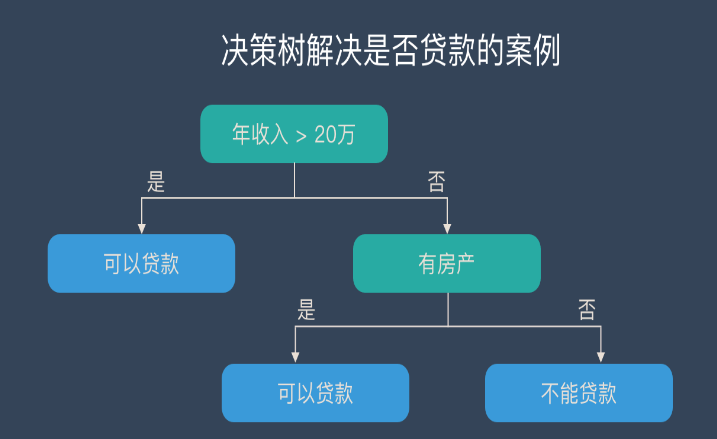

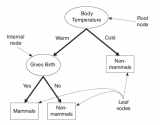

今天,我们介绍机器学习里比较常用的一种分类算法,决策树。决策树是对人类认知识别的一种模拟,给你一堆看似杂乱无章的数据,如何用尽可能少的特征,对这些数据进行有效的分类。

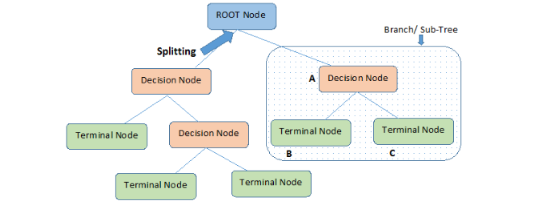

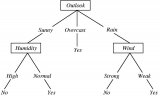

决策树借助了一种层级分类的概念,每一次都选择一个区分性最好的特征进行分类,对于可以直接给出标签 label 的数据,可能最初选择的几个特征就能很好地进行区分,有些数据可能需要更多的特征,所以决策树的深度也就表示了你需要选择的几种特征。

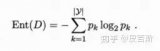

在进行特征选择的时候,常常需要借助信息论的概念,利用最大熵原则。

决策树一般是用来对离散数据进行分类的,对于连续数据,可以事先对其离散化。

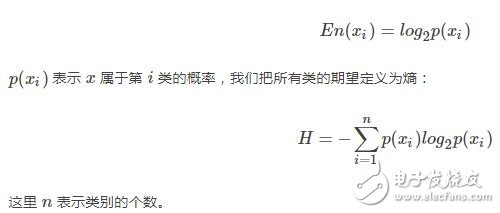

在介绍决策树之前,我们先简单的介绍一下信息熵,我们知道,熵的定义为:

我们先构造一些简单的数据:

from sklearn import datasets

import numpy as np

import matplotlib.pyplot as plt

import math

import operator

def Create_data():

dataset = [[1,1,‘yes’],

[1, 1,‘yes’],

[1, 0, ‘no’],

[0, 1, ‘no’],

[0, 1, ‘no’],

[3, 0, ‘maybe’]]

feat_name = [‘no surfacing’, ‘flippers’]

return dataset, feat_name

然后定义一个计算熵的函数:

def Cal_entrpy(dataset):

n_sample = len(dataset)

n_label = {}

for featvec in dataset:

current_label = featvec[-1]

if current_label not in n_label.keys():

n_label[current_label] = 0

n_label[current_label] += 1

shannonEnt = 0.0

for key in n_label:

prob = float(n_label[key]) / n_sample

shannonEnt -= prob * math.log(prob, 2)

return shannonEnt

要注意的是,熵越大,说明数据的类别越分散,越呈现某种无序的状态。

下面再定义一个拆分数据集的函数:

def Split_dataset(dataset, axis, value):

retDataSet = []

for featVec in dataset:

if featVec[axis] == value:

reducedFeatVec = featVec[:axis]

reducedFeatVec.extend(featVec[axis+1 :])

retDataSet.append(reducedFeatVec)

return retDataSet

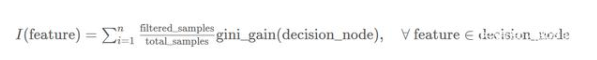

结合前面的几个函数,我们可以构造一个特征选择的函数:

def Choose_feature(dataset):

num_sample = len(dataset)

num_feature = len(dataset[0]) - 1

baseEntrpy = Cal_entrpy(dataset)

best_Infogain = 0.0

bestFeat = -1

for i in range (num_feature):

featlist = [example[i] for example in dataset]

uniquValus = set(featlist)

newEntrpy = 0.0

for value in uniquValus:

subData = Split_dataset(dataset, i, value)

prob = len(subData) / float(num_sample)

newEntrpy += prob * Cal_entrpy(subData)

info_gain = baseEntrpy - newEntrpy

if (info_gain 》 best_Infogain):

best_Infogain = info_gain

bestFeat = i

return bestFeat

然后再构造一个投票及计票的函数

def Major_cnt(classlist):

class_num = {}

for vote in classlist:

if vote not in class_num.keys():

class_num[vote] = 0

class_num[vote] += 1

Sort_K = sorted(class_num.iteritems(),

key = operator.itemgetter(1), reverse=True)

return Sort_K[0][0]

有了这些,就可以构造我们需要的决策树了:

def Create_tree(dataset, featName):

classlist = [example[-1] for example in dataset]

if classlist.count(classlist[0]) == len(classlist):

return classlist[0]

if len(dataset[0]) == 1:

return Major_cnt(classlist)

bestFeat = Choose_feature(dataset)

bestFeatName = featName[bestFeat]

myTree = {bestFeatName: {}}

del(featName[bestFeat])

featValues = [example[bestFeat] for example in dataset]

uniqueVals = set(featValues)

for value in uniqueVals:

subLabels = featName[:]

myTree[bestFeatName][value] = Create_tree(Split_dataset

(dataset, bestFeat, value), subLabels)

return myTree

def Get_numleafs(myTree):

numLeafs = 0

firstStr = myTree.keys()[0]

secondDict = myTree[firstStr]

for key in secondDict.keys():

if type(secondDict[key]).__name__ == ‘dict’ :

numLeafs += Get_numleafs(secondDict[key])

else:

numLeafs += 1

return numLeafs

def Get_treedepth(myTree):

max_depth = 0

firstStr = myTree.keys()[0]

secondDict = myTree[firstStr]

for key in secondDict.keys():

if type(secondDict[key]).__name__ == ‘dict’ :

this_depth = 1 + Get_treedepth(secondDict[key])

else:

this_depth = 1

if this_depth 》 max_depth:

max_depth = this_depth

return max_depth

电子发烧友App

电子发烧友App

评论